For years, Apple has cultivated an image of unwavering commitment to user privacy, a cornerstone of its brand identity. This dedication has even influenced the integration of AI into its devices, sometimes at the cost of performance, as the company prioritized on-device processing. However, a recent discovery surrounding iOS 18’s “Enhanced Visual Search” feature within the Photos app raises serious questions about whether this commitment is as steadfast as we believe.

The “Visual Look Up” feature, introduced in iOS 15, allowed users to identify objects, plants, pets, and landmarks within their photos. It also improved search within the Photos app, letting users find specific pictures using keywords. iOS 18 brought an evolved version of this feature: “Enhanced Visual Search,” also present in macOS 15. While presented as an improvement, this new iteration has sparked a debate about data privacy.

A Deep Dive into Enhanced Visual Search: How it Works and What it Means

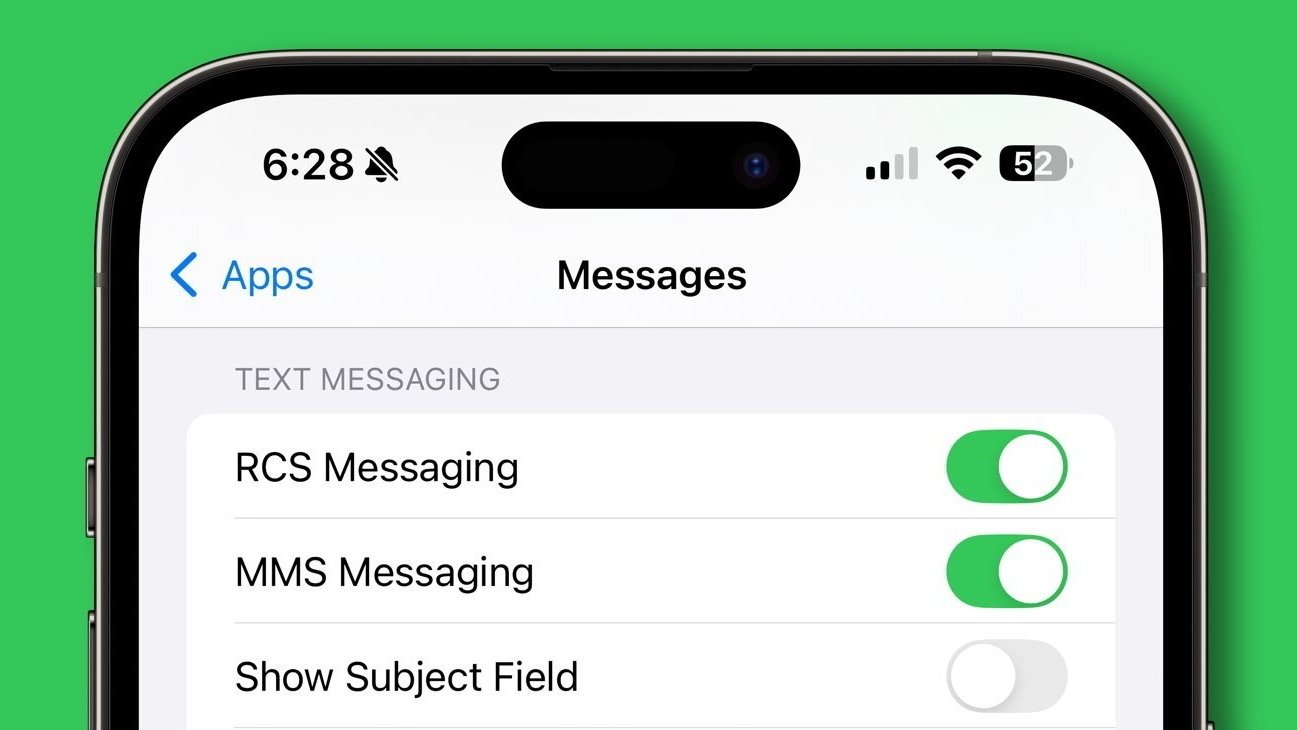

The Enhanced Visual Search feature is controlled by a toggle within the Photos app settings. The description accompanying this toggle states that enabling it will “privately match places in your photos.” However, independent developer Jeff Johnson’s meticulous investigation reveals a more complex reality.

Enhanced Visual Search operates by generating a “vector embedding” of elements within a photograph. This embedding captures the key characteristics of objects and landmarks in the image as a list of numbers, creating a compact digital fingerprint. According to Johnson’s findings, the embedding is then transmitted to Apple’s servers for analysis. These servers process the data and return a set of potential matches, from which the user’s device selects the most appropriate result based on the user’s search query.
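
To make that flow concrete, here is a minimal toy sketch of the three steps described above: an embedding leaves the device, a server returns several candidate matches, and the device picks one based on the search query. Everything in it is an assumption made for illustration: the three-number embeddings, the `SERVER_INDEX` table, and the `server_candidates` and `device_select` functions are hypothetical, and the sketch leaves out any encryption or anonymization layer. It is not Apple’s implementation.

```python
import math

# Hypothetical server-side index: landmark name -> embedding vector.
# Real embeddings would have far more dimensions; these values are invented.
SERVER_INDEX = {
    "Eiffel Tower": [0.9, 0.1, 0.0],
    "Tokyo Tower": [0.7, 0.5, 0.1],
    "Golden Gate Bridge": [0.1, 0.9, 0.2],
}


def cosine_similarity(a, b):
    """Similarity of two vectors, where 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def server_candidates(embedding, k=2):
    """Server side (assumed): return the k landmark names closest to the embedding."""
    ranked = sorted(
        SERVER_INDEX,
        key=lambda name: cosine_similarity(embedding, SERVER_INDEX[name]),
        reverse=True,
    )
    return ranked[:k]


def device_select(candidates, query):
    """Device side (assumed): keep the first candidate that matches the query text."""
    needle = query.lower()
    for name in candidates:
        if needle in name.lower():
            return name
    return None


# The device computes an embedding for a region of the photo (values invented),
# sends only that vector, and filters the returned candidates locally.
photo_embedding = [0.85, 0.2, 0.05]
candidates = server_candidates(photo_embedding)
match = device_select(candidates, "tokyo")
print(candidates, match)
```

Even in this simplified form, the point of contention is visible: the photo itself stays on the device, but a vector derived from it does not.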

While Apple likely employs robust security measures to protect this data, the fact remains that the feature is enabled by default, so information is sent off-device without the user ever being asked for consent. Shipping this behavior switched on in a major operating system update seems to contradict Apple’s historically stringent privacy practices.

The Privacy Paradox: On-Device vs. Server-Side Processing

The core of the privacy concern lies in the distinction between on-device and server-side processing. If the analysis were performed entirely on the user’s device, the data would remain within their control. However, by sending data to Apple’s servers, even with assurances of privacy, a degree of control is relinquished.

Johnson argues that true privacy exists when processing occurs entirely on the user’s computer. Sending data to the manufacturer, even a trusted one like Apple, inherently compromises that privacy, at least to some extent. He further emphasizes the potential for vulnerabilities, stating, “A software bug would be sufficient to make users vulnerable, and Apple can’t guarantee that their software includes no bugs.” This highlights the inherent risk associated with transmitting sensitive data, regardless of the safeguards in place.

A Shift in Practice? Examining the Implications

Enabling Enhanced Visual Search by default, without explicit user consent, raises questions about a potential shift in Apple’s approach to privacy. While the company maintains its commitment to user data protection, this instance suggests a willingness to prioritize functionality and convenience, perhaps at the expense of absolute privacy.

This situation underscores the importance of user awareness and control. Users should be fully informed about how their data is being used and given the choice to opt out of features that involve data transmission. While Apple’s assurances of private processing offer some comfort, the potential for vulnerabilities and the lack of explicit consent remain significant concerns.

This discovery serves as a crucial reminder that constant vigilance is necessary in the digital age. Even with companies known for their privacy-centric approach, it is essential to scrutinize new features and understand how they handle our data. The case of iOS 18’s Enhanced Visual Search highlights the delicate balance between functionality, convenience, and the fundamental right to privacy in a connected world. It prompts us to ask: how much are we willing to share, and at what cost?